I have been working in the quality field for about 10 years, and only recently did I discover a rule of thumb that changed the way I view quality improvements. The penny dropped when I came upon “When to Use Statistics, One-piece Flow, Or Mistake-Proofing to Improve Quality”, by Michel Baudin.

The author has a wealth of experience, and I thoroughly enjoyed his 3 books about the ‘nuts and bolts’ of assembly operations, logistics, and working with machines.

Let’s look at the 3 major quality improvement approaches and when they make sense.

1. Where statistical tools bring value

When a process is very complex (e.g. semiconductor wafer fabrication, paint in an automotive plant…), it can be hard to fine-tune it into making consistently good products.

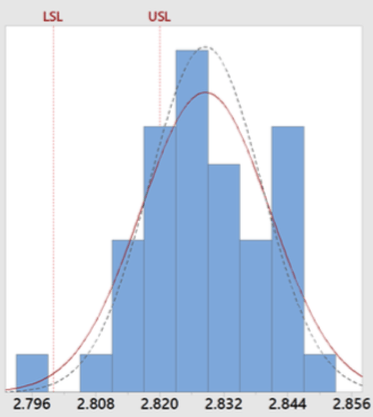

If the parts coming out of a process are not consistently within specifications (see an example below), and you have no idea what is causing it, statistics can probably help.

Statistical tools such as DOE (Design of Experiments) help engineers find an optimal set of process parameters by running a small number of trials on a few controlled variables. Without specialized training, one can easily get lost in the many options:

[Image: statistical tools such as DOE (Design of Experiments)]
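To make the idea of a DOE a bit more concrete, here is a minimal sketch of the simplest kind, a two-level full factorial design. The factor names, settings, and defect rates are all made up for illustration; they do not come from the author's article or from a real process.

```python
from itertools import product
from statistics import mean

# Hypothetical factors and their low/high settings (illustrative values only).
factors = {
    "oven_temp_c": (140, 160),
    "line_speed_m_min": (3, 5),
}

# Full factorial design: one run for every combination of settings.
runs = list(product(*factors.values()))

# Hypothetical measured defect rate (%) for each run, in the same order as `runs`.
defect_rate = [4.0, 6.0, 2.0, 3.0]

def main_effect(factor_index):
    """Average response at the high setting minus average response at the low setting."""
    low, high = list(factors.values())[factor_index]
    at_high = [y for run, y in zip(runs, defect_rate) if run[factor_index] == high]
    at_low = [y for run, y in zip(runs, defect_rate) if run[factor_index] == low]
    return mean(at_high) - mean(at_low)

effects = {name: main_effect(i) for i, name in enumerate(factors)}
```

With these invented numbers, raising the oven temperature lowers the defect rate while raising the line speed increases it. A real study would add replicates, randomize the run order, and look at interactions, which is where the specialized training comes in.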

Aside from DOEs, some tools can help confirm (or invalidate) whether a change in a process input or variable resulted in a change in process output – for example, did the average move in the right direction, or was the standard deviation reduced? These can be quite helpful, too.
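As a rough sketch of that kind of before/after check, the snippet below compares two small samples using only the Python standard library. The measurements are hypothetical, and Welch's t statistic is just one reasonable choice here, not necessarily the tool the author has in mind.

```python
from math import sqrt
from statistics import mean, stdev

def compare(before, after):
    """Return the shift in mean, the ratio of standard deviations, and Welch's t statistic."""
    m_b, m_a = mean(before), mean(after)
    s_b, s_a = stdev(before), stdev(after)
    # Welch's t statistic does not assume the two samples have equal variances.
    t = (m_a - m_b) / sqrt(s_a**2 / len(after) + s_b**2 / len(before))
    return {"mean_shift": m_a - m_b, "stdev_ratio": s_a / s_b, "welch_t": t}

# Hypothetical coating thickness measurements (microns) before and after a process change.
before = [46.0, 50.0, 48.0, 45.0, 51.0]
after = [50.0, 51.0, 50.0, 49.0, 50.0]
result = compare(before, after)
```

A `stdev_ratio` below 1 suggests the spread was reduced. Whether the shift in the mean is more than noise depends on comparing the t statistic against the t distribution, which a statistics package (for example `scipy.stats.ttest_ind` with `equal_var=False`) can do for you. With samples this small, the conclusion would be tentative at best.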

David Collins, co-founder of our firm, is a strong advocate for statistical process control, and there is a reason for it. He spent years of his professional life working on automotive paint, a very complicated process that can cause severe cost overruns if it is not tightly controlled.

2. Where process layout changes are the logical next step to improve quality

Now let’s say you operate a much simpler process. The author takes the example of filling a part with 1 lb of oil.

The main issue here is operator mistakes – not filling one part, filling it twice, not waiting until a part is filled up, etc.

The main culprit is the way the process is set up – as a batch processing operation. In most Chinese factories, the parts are presented in a batch to operators. There are several issues with this:

- The workers have to use their memory and remember which ones are already full and which ones are empty.

- If issues are found at the next process, the feedback might not come immediately because of a buildup of inventory. We often see factories that have to sort through 2 weeks of production for this exact reason.

- If strict FIFO (First-In-First-Out) is not observed, a number of other issues can pop up – difficulty in finding parts from a bad batch, aged inventory, and so forth.

The process layout typically falls under the production department’s responsibility. And yet, very few Chinese production managers are aware that the layout has a direct impact on quality!

The appropriate solution is to present and process the parts one by one, always in a FIFO sequence. Go/no go gauges can be used to catch the most likely issues immediately.
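The feedback delay caused by inventory between two steps can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: a strict FIFO buffer of fixed size, and an upstream problem that makes every part defective from a given point onward. The function name and parameters are hypothetical.

```python
from collections import deque

def defectives_before_detection(buffer_size, first_bad_part, total_parts=10_000):
    """Count defective parts made upstream before the next step sees the first one.

    Assumes a strict FIFO buffer of `buffer_size` parts between the two steps,
    and that every part from `first_bad_part` onward is defective.
    """
    buffer = deque()
    made_bad = 0
    for part in range(1, total_parts + 1):
        is_bad = part >= first_bad_part
        if is_bad:
            made_bad += 1
        buffer.append(is_bad)
        # The next step only pulls a part once the buffer is over its limit.
        if len(buffer) > buffer_size:
            if buffer.popleft():
                return made_bad
    return made_bad

one_piece_flow = defectives_before_detection(buffer_size=0, first_bad_part=1000)
batch = defectives_before_detection(buffer_size=500, first_bad_part=1000)
```

With no buffer, the next step sees the very first defective part. With 500 parts waiting in between, 501 defective parts already exist by the time the first one is noticed, and all of them need to be sorted.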

The author writes:

“It is well known that quality improves as a result of converting a sequence of operations to a U-shaped cell. The proportion of defectives traced to the operations in a cell drops by at least 50%, and we have seen it go down as much as 90%.”

That corresponds to what we have seen, too.

(Can’t this be done even with a high percentage of defectives? No, because it makes true one-piece flow quite inefficient: all those defective parts have to be put aside, and workers stand idle and lose their pace.)

3. When error proofing comes into play

Some mistakes are still possible, but most have already been eliminated. The next step would be to think of error-proofing devices.

For example, the author describes a case where the operator mistakenly pushes a part forward without filling it. A weight-checking (and alert) system could be added to the oil dispenser to eliminate that failure mode.
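The logic behind such a weight check is simple, as the sketch below suggests. The 1 lb target comes from the author's example, but the 10 g tolerance, the function names, and the alert message are assumptions made purely for illustration.

```python
LB_TO_G = 453.592  # grams per pound

def fill_is_ok(empty_weight_g, filled_weight_g, target_oil_g=LB_TO_G, tolerance_g=10.0):
    """Return True if the net oil weight is within tolerance of the target."""
    net = filled_weight_g - empty_weight_g
    return abs(net - target_oil_g) <= tolerance_g

def release_part(empty_weight_g, filled_weight_g):
    """Block the part and raise an alert unless the fill check passes."""
    if not fill_is_ok(empty_weight_g, filled_weight_g):
        return "ALERT: part blocked, check oil fill"
    return "OK: part released"

not_filled = release_part(200.0, 200.0)       # operator forgot to fill
filled_once = release_part(200.0, 653.6)      # about 1 lb of oil added
filled_twice = release_part(200.0, 1107.2)    # about 2 lb of oil added
```

The same check catches both the unfilled part and the part filled twice, so the operator no longer has to rely on memory. In practice the check would be wired to the dispenser or conveyor so that a failed part physically cannot move on.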

Some of our consultants have managed assembly workshops in the automotive industry and other verticals. They are strong proponents of process layout changes and error proofing because they saw first-hand that these approaches work.

The author writes:

“Mistake-proofing is in fact to quality assurance as kanbans are to production control: an innovative and powerful tool, but only a component of a comprehensive approach that must also handle inspections, testing, failure analysis/process improvement, emergency response, and external audits.”

There you go! Mistake-proofing makes sense where there is a need for it, as one component of a broader approach.

4. Adapting your quality improvement approach to the situation

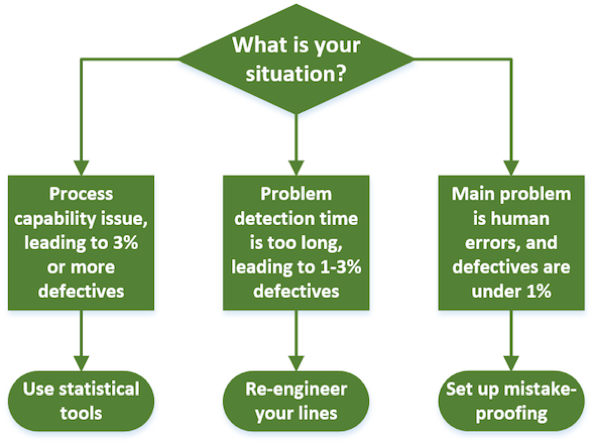

The author suggests the following approach. (Proportions of defectives should be taken as orders of magnitude, not as precise delimitations.)

One can think of many exceptions. For example, a fully automated line might not need mistake-proofing systems. (Or does it? Don’t the vision systems necessary for recognizing and picking parts also play that role?)

And yet, it strikes me as an excellent rule of thumb.

What an irony. The aim of the Six Sigma movement was to reduce the proportion of parts out of tolerance to nearly nothing by reaching a capability index (Cp) of 2.

[Image: capability index (Cp) of 2]
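For readers who want to see what a Cp of 2 implies, here is a short sketch using the standard definition of Cp (tolerance width divided by six standard deviations). The out-of-tolerance estimate assumes a perfectly centered, normally distributed process, which real processes rarely are; the specification limits in the example are made up.

```python
from math import erfc, sqrt

def cp(usl, lsl, sigma):
    """Process capability index: tolerance width divided by six standard deviations."""
    return (usl - lsl) / (6 * sigma)

def fraction_out_of_tolerance(cp_value):
    """Expected fraction outside the limits for a centered, normally distributed process."""
    # Each specification limit sits 3 * Cp standard deviations from the mean.
    z = 3 * cp_value
    return erfc(z / sqrt(2))

# Hypothetical dimension with limits 94 to 106 and a standard deviation of 1.
example_cp = cp(usl=106.0, lsl=94.0, sigma=1.0)
out_at_cp_1 = fraction_out_of_tolerance(1.0)
out_at_cp_2 = fraction_out_of_tolerance(example_cp)
```

Under these assumptions, a Cp of 1 leaves roughly 0.27% of parts out of tolerance, while a Cp of 2 leaves about 2 parts per billion. The 3.4 defects per million usually quoted for Six Sigma comes from additionally allowing the process mean to drift by 1.5 standard deviations.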

If you pick up a book about Six Sigma, it is full of statistical tools. And yet, in most industries with relatively simple or mature processes, it’s not the statistics that help drive the proportion of defective products to such a low level.

Does this make sense to you? What quality improvement approach has worked well for you? What has your experience been with the 3 approaches listed in this article? Share a comment below, and we’ll be sure to respond!